The U.S. power grid is undergoing a transformation. Operational focus is shifting away from utilities with large centralized generators at the transmission level and moving toward individual customers who contribute generation from distributed energy resources (DERs) at the distribution level. Utility-scale renewable generation, meanwhile, continues to span across both transmission and distribution networks.

The current interconnection process for small- to grid-scale DERs is well-defined but time-consuming and complex. It involves studying every DER application within a network model to identify its impacts on the network, both locally and systemwide.

New technologies and applications are expanding the use cases for the capacity analysis approach, which is radically changing how interconnections are evaluated. Rather than focusing on individual DER connections, capacity analysis can allow you to study nearly every possible interconnection scenario. By identifying and understanding the ideal locations and usage times for DERs — and optimizing operating conditions — capacity analysis is making faster approvals and interconnections, as well as higher DER penetration, possible.

Hosting Capacity Analysis: How It Works

Hosting capacity refers to the amount of DER generation and load that each node on an electric distribution network can accommodate without upgrading existing infrastructure.

Data on a network’s hosting capacity provides useful insights to utilities wanting to integrate DERs into their systems. Creating network models and databases that can deliver accurate hosting results requires significant data preparation. That said, the payoff can still be significant, leading to a solid foundation in grid analytics, multiple efficiencies and better system understanding.

Hosting Capacity Analysis (HCA) is a tool used by utilities to plan for and approve DER connections to their systems. It works by determining the maximum load or generation that a distribution circuit can integrate without introducing new system violations or exacerbating existing ones.

This forward-looking analysis involves three well-defined engineering studies used in the interconnection process. The results of any of the studies can potentially impact the load or generation size that can be interconnected. They include:

Thermal/operating studies to assess cables, conductors, transformers, fuses, switches and other equipment with loading limits.

Voltage/power quality studies to evaluate a distribution circuit’s steady state voltage, voltage variation and power factor.

Protection studies to review substation breakers and relays, reclosers, fuses, and other components designed to protect against network damage.

Hosting capacity calculations are typically performed in a distribution planning model software tool such as CYME, Synergi or WindMil. These software programs make it possible to create simulations on each of a circuit’s nodes — that is, the spots where two or more line sections intersect — through an iterative process that looks for violations during each repetition of the simulation.

The software then typically calculates the hosting capacity for each separate study that is performed. The Electric Power Research Institute (EPRI) has also developed the Distribution Resource Integration and Value Estimation (DRIVE) software that can be fed circuit models and data to calculate hosting capacity.

How HCA Speeds the DER Approval Process

Regardless of which tool is used to conduct hosting capacity calculations and data visualizations, capacity analyses can help improve and speed up the DER planning and approval process. A simple example explains how.

To study the feasibility of a proposed community solar project using capacity analysis, the generator could be added to multiple locations along the feeder through an iterative process. Generation output values would be adjusted in iterative steps until violations appear.

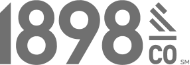

A potential violation or limitation could occur if the added generation exceeds the thermal capacity of the cable connecting to the project. Alternatively, a violation could result from the high voltage seen when a feeder is lightly loaded at minimum demand. The addition of large generators at the end of a circuit can also reduce the reach of substation relays or reclosers, inhibiting their ability to see the full downstream fault current. The existing settings could then fail to operate the breaker to clear the downstream fault. The generation hosting capacity at a given location is the smallest of the limits imposed by each of these constraints. Interconnection planners can then assess a variety of scenarios that may provide more cost-effective locations for these DERs.
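The iterative search described above can be sketched in a few lines of Python. This is a minimal illustration, not how CYME, Synergi or WindMil implement it: the three check functions and their kW thresholds are hypothetical stand-ins for a real power-flow or protection study at one location.

```python
from typing import Callable, Dict

# Hypothetical stand-ins for the three study types. Each returns True when
# the added generation (kW) would cause a violation at the studied location.
# In practice a power-flow or fault study would replace these thresholds.
def thermal_violation(gen_kw: float) -> bool:
    return gen_kw > 1500.0  # assumed cable rating

def voltage_violation(gen_kw: float) -> bool:
    return gen_kw > 1200.0  # assumed overvoltage limit at minimum demand

def protection_violation(gen_kw: float) -> bool:
    return gen_kw > 2000.0  # assumed loss of relay reach

def capacity_for_check(check: Callable[[float], bool],
                       step_kw: float = 100.0,
                       max_kw: float = 5000.0) -> float:
    """Raise generation in fixed steps; return the last size with no violation."""
    gen_kw = 0.0
    while gen_kw + step_kw <= max_kw and not check(gen_kw + step_kw):
        gen_kw += step_kw
    return gen_kw

def hosting_capacity(checks: Dict[str, Callable[[float], bool]]) -> Dict[str, float]:
    """Capacity per study, plus the overall value (the most limiting study)."""
    results = {name: capacity_for_check(check) for name, check in checks.items()}
    results["overall"] = min(results.values())
    return results

if __name__ == "__main__":
    print(hosting_capacity({
        "thermal": thermal_violation,
        "voltage": voltage_violation,
        "protection": protection_violation,
    }))
```

Reporting the per-study values alongside the overall minimum keeps the limiting constraint visible, which is useful when comparing candidate locations.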

The same methodology is used to calculate the hosting capacity for load. A load is added to the model, with its size increased in iterative steps until a limitation is met. This process could be used, for example, to assess the maximum electric vehicle charging load that could be added during feeder peak loading before thermal overloads or undervoltage conditions would be detected.
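As a rough illustration of the load case, the sketch below steps up a load at the end of a single three-phase line section and stops at the first thermal or undervoltage limit. It uses the common approximation ΔV ≈ (R·P + X·Q) / V rather than a full power flow, and every value in it (impedance, 12.47 kV nominal voltage, 300 A ampacity, 0.95 per-unit minimum voltage, 0.95 power factor) is an assumption chosen for illustration. The feeder's existing peak load is omitted for brevity.

```python
import math

def end_voltage_pu(load_kw, pf=0.95, r_ohm=0.5, x_ohm=0.8, v_ll_kv=12.47):
    """Approximate end-of-line voltage (per unit) with dV ~ (R*P + X*Q) / V."""
    q_kvar = load_kw * math.tan(math.acos(pf))
    v_ll_v = v_ll_kv * 1000.0
    drop_v = (r_ohm * load_kw + x_ohm * q_kvar) * 1000.0 / v_ll_v
    return 1.0 - drop_v / v_ll_v

def line_current_a(load_kw, pf=0.95, v_ll_kv=12.47):
    """Three-phase line current in amperes for a load in kW."""
    return (load_kw / pf) / (math.sqrt(3) * v_ll_kv)

def load_hosting_capacity(step_kw=100.0, max_kw=20000.0,
                          ampacity_a=300.0, v_min_pu=0.95):
    """Increase load in steps; return (capacity_kw, limiting_constraint)."""
    load_kw = 0.0
    while load_kw + step_kw <= max_kw:
        trial_kw = load_kw + step_kw
        if line_current_a(trial_kw) > ampacity_a:
            return load_kw, "thermal"
        if end_voltage_pu(trial_kw) < v_min_pu:
            return load_kw, "undervoltage"
        load_kw = trial_kw
    return load_kw, "none"

if __name__ == "__main__":
    print(load_hosting_capacity())
```

With these assumed values the thermal limit is reached before the voltage limit; a longer or higher-impedance line would shift the result toward undervoltage.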

The most conservative hosting capacity will result when a capacity analysis considers a full year of hourly average demand profiles. Typically created from real SCADA (supervisory control and data acquisition) or AMI (advanced metering infrastructure) data, these profiles can also be simulated in cases where existing loading or generation data is not available.

When 8760 profiles (24 hours/day x 365 days/year) are used as input to the distribution planning model, all of the peak and minimum loading conditions a circuit has experienced over the year are represented. The hosting capacity analysis calculates the maximum generation or load at each node for every hour of the 8760 profile, producing a large set of results data. The final capacity value at each node is the minimum of the 8760 different results for that node and represents the worst-case scenario for loading conditions. This data gives utilities a quick view of their existing system capacity over a year.
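The reduction from 8760 hourly results to one value per node is a simple minimum. A minimal sketch, assuming the hourly results are already available as a dictionary of lists keyed by a node name (the data layout and function name are hypothetical):

```python
from typing import Dict, List, Tuple

HOURS_PER_YEAR = 24 * 365  # 8760

def final_capacity(hourly_kw: Dict[str, List[float]]) -> Dict[str, Tuple[float, int]]:
    """Return (capacity_kw, limiting_hour) per node: the minimum hourly result."""
    result = {}
    for node, values in hourly_kw.items():
        if len(values) != HOURS_PER_YEAR:
            raise ValueError(f"{node}: expected {HOURS_PER_YEAR} hourly values")
        # Index of the hour with the smallest hosting capacity result.
        limiting_hour = min(range(HOURS_PER_YEAR), key=values.__getitem__)
        result[node] = (values[limiting_hour], limiting_hour)
    return result
```

Keeping the limiting hour next to the value shows when the worst case occurs, which matters for the time-dependent use cases discussed later.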

Alternatively, 8760 profiles can be aggregated into a smaller set of profiles that address only peak and minimum demands. Using fewer profiles speeds up processing time but provides less granular data, which can limit some of the possible use cases.
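One possible aggregation, shown here only as a sketch, collapses the 8760 hourly values into two 24-hour profiles: the highest and lowest demand observed at each hour of the day. The function name and the choice of hour-of-day envelopes are assumptions; utilities may aggregate by season, day type or other groupings instead.

```python
from typing import List, Tuple

def peak_and_min_day(demand_kw: List[float]) -> Tuple[List[float], List[float]]:
    """Collapse an 8760-hour profile into peak and minimum 24-hour envelopes."""
    if len(demand_kw) != 8760:
        raise ValueError("expected 8760 hourly values")
    # Split the year into 365 consecutive days of 24 hourly values.
    days = [demand_kw[d * 24:(d + 1) * 24] for d in range(365)]
    peak = [max(day[h] for day in days) for h in range(24)]
    minimum = [min(day[h] for day in days) for h in range(24)]
    return peak, minimum
```

This reduces the number of simulated hours from 8760 to 48, at the cost of losing the date on which each extreme occurred.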

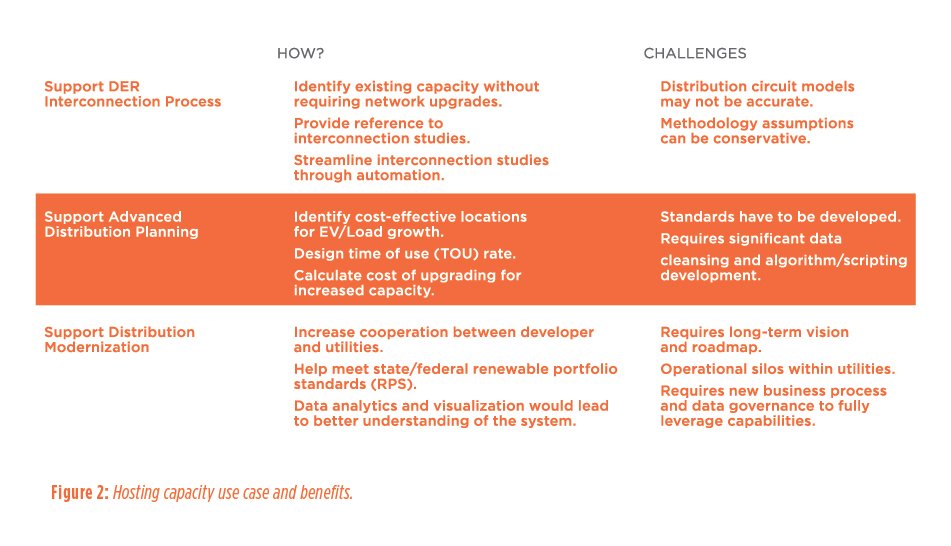

Hosting capacity study results can provide an unmatched level of granularity in identifying locations to install new generation or load. Load and generation capacity results can also be aggregated and color-coded to represent specific areas or parcels of land. This information can be published in online maps for possible review by interested consumers and developers.

DER Hosting Capacity: Use Cases and Benefits

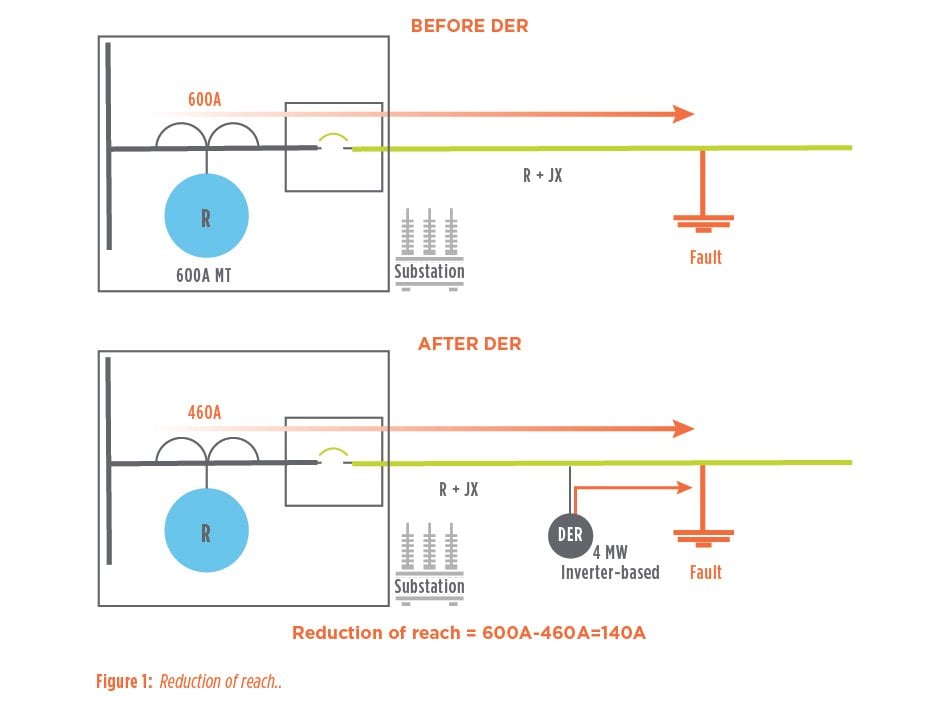

The benefits of capacity analysis are significant and wide-ranging.

It speeds response time. In addition to detailing locations to add generation or load, the HCA process can also be designed to include estimated interconnection costs. If, for example, an interconnection is planned at a location and capacity that may cause violations, capacity analysis can identify the limiting components and determine the upgrades needed to resolve the violations. New tools, such as Python scripting, can be used to automate multiple processes. By comparing the upgrade requirements to compatible units or standards, utilities can quickly estimate interconnection project costs and rapidly provide developers siting and budgetary insights that are critical to time-sensitive projects.
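A cost-estimating step of this kind could be scripted as a lookup against a table of compatible units. The sketch below is illustrative only: the unit names and dollar values are placeholders, not real utility costs, and the function name is hypothetical.

```python
from typing import Dict

# Placeholder compatible-unit costs in USD. These numbers are invented for
# illustration; a utility would load its own compatible-unit catalog.
UNIT_COSTS_USD: Dict[str, float] = {
    "reconductor_per_km": 100_000.0,
    "recloser_each": 40_000.0,
    "regulator_each": 60_000.0,
}

def estimate_upgrade_cost(upgrades: Dict[str, float]) -> float:
    """Sum quantity x unit cost for each upgrade the analysis identified."""
    total = 0.0
    for unit, quantity in upgrades.items():
        if unit not in UNIT_COSTS_USD:
            raise KeyError(f"no compatible unit defined for '{unit}'")
        total += UNIT_COSTS_USD[unit] * quantity
    return total
```

For example, `estimate_upgrade_cost({"reconductor_per_km": 1.5, "recloser_each": 1})` adds the cost of 1.5 km of reconductoring to one recloser using the placeholder table.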

It supports transportation electrification. Capacity analysis can also help guide the transition to transportation electrification. Load and profile modeling results can help utilities respond more quickly to the large load increases created by fleet vehicle charging, fast-charging systems and other new charging infrastructure.

It can be used to develop time-of-use rates. Likewise, 8760 profiles can be helpful in identifying critical peak scenarios where load can be shifted or curtailed to avoid costly system upgrades. Profile information can also provide crucial insight into time-of-use (TOU) and other rate designs. When used in combination with TOU rates, the locational impact data available through capacity analysis can help utilities offer pricing that more accurately reflects changing load patterns. The availability of this data is expected to drive collaboration between developers and utilities by supporting efforts to meet government-mandated Renewable Portfolio Standards (RPS) without curtailing excess renewable generation.

It improves asset monitoring and operational efficiency. Capacity analysis is in many ways like a fitness tracker for the grid, enabling utility operators and engineers to monitor the grid's performance in near real time. They may find, for example, that a circuit has regulators or capacitors that switch twice as often as those on the other circuits in a substation, alerting operators and engineers to a possible maintenance issue. Or they may find a substation that has significant capacity to add new generators, which could avoid a costly transformer replacement for load growth down the road.
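The switching comparison in this example can be expressed as a simple screen. The sketch below assumes operation counts per circuit are already available from simulation results; the function name, data layout and the use of the median of the other circuits as the baseline are all assumptions.

```python
from statistics import median
from typing import Dict, List

def flag_high_switching(ops_by_circuit: Dict[str, int], ratio: float = 2.0) -> List[str]:
    """Flag circuits whose device operation count is at least `ratio` times
    the median count of the other circuits in the same substation."""
    flagged = []
    for circuit, ops in ops_by_circuit.items():
        others = [v for c, v in ops_by_circuit.items() if c != circuit]
        if others and ops >= ratio * median(others):
            flagged.append(circuit)
    return flagged
```

Using the median of the other circuits keeps one unusually busy circuit from inflating the baseline it is compared against.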

It can also provide insight for decision-making. For example, such data makes it possible to compare the benefits of building additional capacity in a parallel circuit versus reconfiguring neighboring circuits to operate more efficiently and free up capacity. Add cost-estimating functions, and utilities achieve a holistic approach to generation interconnections. Gaining access to this level of data provides planners and engineers more insight on how the system operates today and allows them to make data-driven, cost-effective decisions for tomorrow.

It makes near real time, look-ahead planning possible. As utilities collect more SCADA and AMI data, hosting capacity and distribution planning can potentially be performed in near real time and become the backbone of distribution system operations.

In this scenario, near real time SCADA and AMI data become inputs to distribution system models that accurately represent current system conditions, providing visibility into equipment operating status, load and generation. With this information embedded, operators will be able to create models that not only calculate near real time hosting capacity, but also run countless other simulations and scenarios to determine the system’s optimal operating condition and to make corrective decisions to adjust to demand and generation. When used in combination with algorithms, this data can completely change traditional planning and operational methods. With granular, near real time data and algorithms, engineers and operators will be able to forecast energy demand more accurately, optimize system operation and maximize the penetration of DERs.

DER Hosting Capacity Challenges

While capacity analysis offers limitless possibilities, regulatory differences across the country may affect how utilities choose to implement it. Each utility will face its own limitations related to its operational systems, planning tools, data warehouse software or other factors. Standardization of capacity analysis, as a result, may prove challenging.

The lack of standardization, however, gives individual utilities the flexibility to design a capacity analysis system that directly addresses their unique challenges and goals. Python scripting and automation of typical engineering verifications allow for quick algorithm development, which can be tried and tested before being implemented in an enterprise solution. As data-driven solutions become the rule, rather than the exception, the quality and completeness of utility data become increasingly important. Significant data collection, correction and maintenance will be required for any utility looking to modernize its distribution planning processes.

Load profiles, both at the substation and customer level, 8760 or otherwise, will become essential inputs to circuit simulation models. Currently, this data is often either unreliable or missing. Process automation and scripting can significantly reduce the time and resources required to provide usable circuit models. Statistical modeling can help fill dead bands or missing data in SCADA values coming from substation-level demand data. Similarly, load allocations with customer AMI data can provide utilities reasonable approximations to replicate real-world loading scenarios. As more sensors are added to distribution networks, these approximations will grow closer to actual real-world conditions.
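Both ideas can be sketched with very simple stand-ins. The gap fill below replaces missing hourly SCADA values with the mean of the known values at the same hour of day, and the allocation splits a feeder-head demand among customers in proportion to their AMI energy. Both are deliberately basic assumptions made for illustration; production workflows would likely use richer statistical models, and the function names are hypothetical.

```python
from typing import Dict, List, Optional

def fill_gaps(scada_kw: List[Optional[float]]) -> List[Optional[float]]:
    """Fill None entries with the mean of known values at the same hour of day."""
    sums = [0.0] * 24
    counts = [0] * 24
    for i, value in enumerate(scada_kw):
        if value is not None:
            sums[i % 24] += value
            counts[i % 24] += 1
    filled = []
    for i, value in enumerate(scada_kw):
        hour = i % 24
        if value is None and counts[hour] > 0:
            value = sums[hour] / counts[hour]
        filled.append(value)  # stays None if that hour was never observed
    return filled

def allocate_load(feeder_kw: float, ami_kwh: Dict[str, float]) -> Dict[str, float]:
    """Split feeder demand among customers in proportion to AMI energy use."""
    total = sum(ami_kwh.values())
    if total <= 0:
        raise ValueError("AMI energy must sum to a positive value")
    return {cust: feeder_kw * kwh / total for cust, kwh in ami_kwh.items()}
```

The list passed to `fill_gaps` is assumed to start at hour 0 of a day so that the index modulo 24 gives the hour of day.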

Typically, a capacity analysis is performed on a circuit in “normal operating configuration,” which represents the typical circuit configuration at a given point in time.

Distribution systems often change throughout the year in accordance with safety and operational needs. SCADA data used to create 8760 profiles captures these changes. An analysis run on a normal operating configuration, however, must compensate for these changes to model a “normal” system more accurately. Changes and abnormal conditions are often difficult to plan around and require complex solutions. Profile cleansing techniques that cater to these specific challenges are needed to calculate hosting capacity values accurately. With automation tools, these cleansing techniques can be adapted to changing network complexities.
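As one hypothetical example of a profile cleansing step, the sketch below flags hours whose demand falls far outside the typical value for that hour of day, as might happen during a temporary load transfer, and replaces them with the typical value. The thresholds (below 0.5 or above 1.5 times the hour-of-day median) and the function name are assumptions; real cleansing rules would be tuned to each utility's switching practices.

```python
from statistics import median
from typing import List, Tuple

def cleanse_profile(demand_kw: List[float],
                    low: float = 0.5,
                    high: float = 1.5) -> Tuple[List[float], List[int]]:
    """Replace hours far from the hour-of-day median; return (cleaned, flagged)."""
    if len(demand_kw) < 24:
        raise ValueError("need at least one full day of hourly data")
    # Group values by hour of day and take the median as the "normal" level.
    by_hour: List[List[float]] = [[] for _ in range(24)]
    for i, value in enumerate(demand_kw):
        by_hour[i % 24].append(value)
    typical = [median(values) for values in by_hour]

    cleaned, flagged = [], []
    for i, value in enumerate(demand_kw):
        ref = typical[i % 24]
        if value < low * ref or value > high * ref:
            flagged.append(i)
            cleaned.append(ref)
        else:
            cleaned.append(value)
    return cleaned, flagged
```

Returning the flagged indices lets an engineer review what was changed before the cleaned profile is used in a study.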

The technical challenges for capacity analysis are significant and include data collection hurdles across a utility’s many departments and the conflation of data sources. If these challenges are overcome, multiple groups within a utility will benefit from the ability to perform detailed analysis on accurate data. Capacity analysis, therefore, becomes a key component of a larger grid and utility modernization plan.

Looking Ahead

The technologies that will shape future distribution planning hold great promise, but many hurdles must still be overcome before capacity analysis benefits can be truly realized by utilities and external stakeholders.

Hosting capacity results can be used by utilities and developers to site new projects more efficiently and effectively. These projects will support efforts to develop an integrated grid with power flows in any direction. The new capabilities will help cities, developers and utilities work more closely together to implement stronger, smarter and more sustainable systems.

Cities that emphasize the need for reliable clean energy and new technologies can improve the lives of the biggest beneficiaries of all: a utility’s customers.